NetSuite Data Migration: Strategy, Cleansing & Cutover

NetSuite Data Migration Guide: Strategy, Cleansing & Cutover Plan

Executive Summary

Migrating data to NetSuite—a leading cloud-based ERP platform—constitutes one of the most critical and risk-laden phases of any ERP implementation [1] [2]. Careful strategy, rigorous data cleansing, detailed mapping, and a comprehensive cutover plan are essential to avoid costly errors and timeline overruns. Industry reports warn that roughly 40% of ERP projects exceed budget and many fail to deliver expected benefits due to data migration issues [3]. In practice, data migration often accounts for 40–60% of the overall project timeline [4], highlighting its complexity and centrality. Best practices stress that migration planning should begin early, involving cross-functional teams (finance, IT, operations, etc.) and clear executive sponsorship [5] [6].

Key findings include:

- Thorough Planning: Define scope and data requirements up front; migrate only “value-added” data (e.g. current master data, open transactions, recent history) while archiving obsolete records [7] [8]. Establish a detailed migration timeline with milestones for data audit, cleansing, testing, and cutover [9] [10]. Test migrations should be run multiple times (typically 2–3) to validate mappings and data accuracy before go-live [11] [12].

- Data Cleansing and Quality: Perform a comprehensive data audit to identify duplicates, missing or outdated fields, and format inconsistencies [12] [13]. Remove or consolidate duplicate master records and correct or standardize invalid data before migration [14] [15]. Experts emphasize that poor data quality is the #1 cause of migration failures [16] [2]; cleaning up issues in a live NetSuite system is far more painful than fixing them upstream.

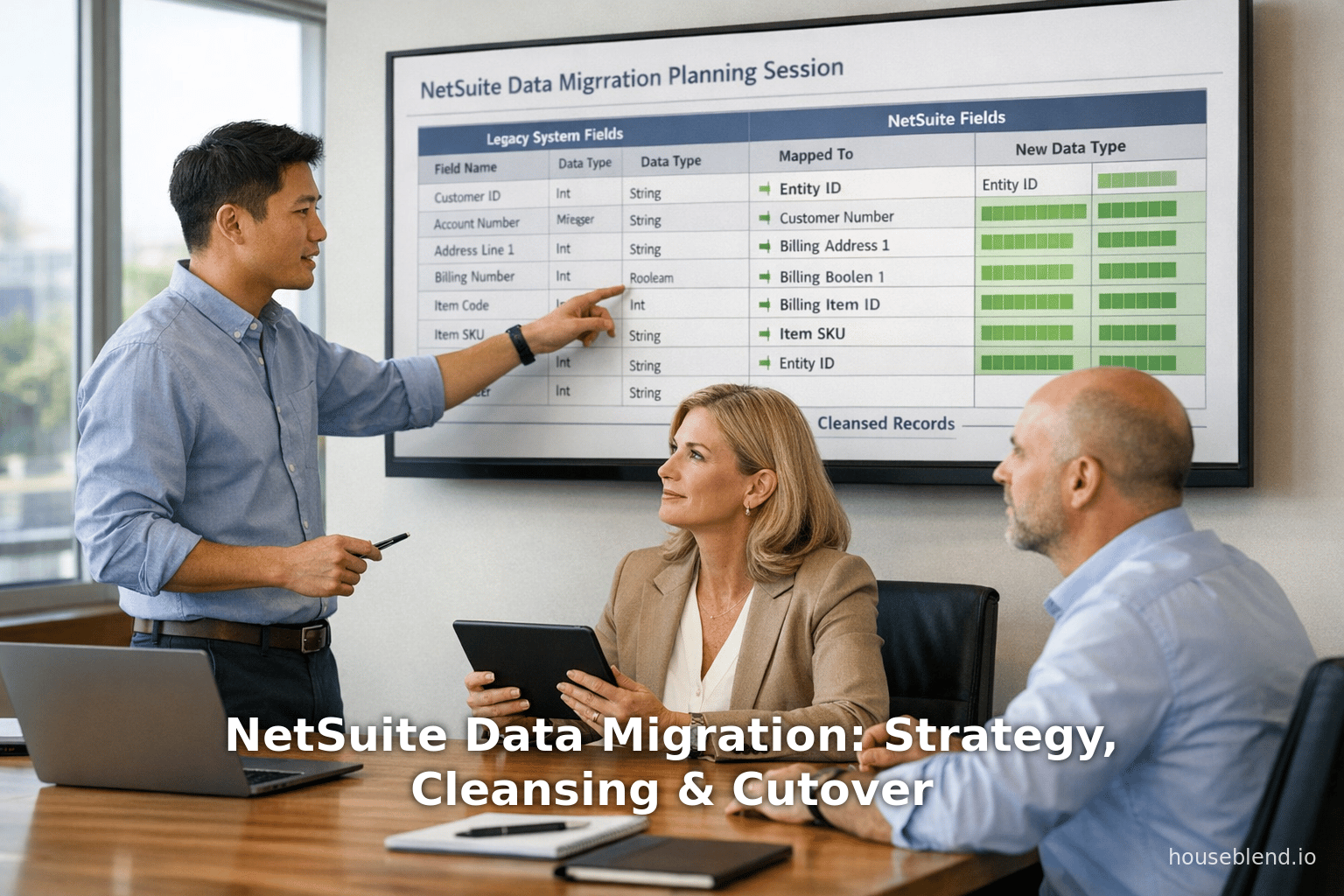

- Data Mapping: Create a detailed field-mapping document that aligns each legacy data attribute to its NetSuite equivalent, noting any required transformations or default values [17] [18]. Use NetSuite-specific constructs (subsidiaries, departments, custom fields, etc.) thoughtfully. Assign external IDs or unique keys to legacy records to simplify linking related entities in NetSuite [19]. Thoroughly document all mapping transformations as an audit trail (“data mapping bible”) [20].

- Migration Execution Tools: Leverage NetSuite’s native CSV Import Assistant for straightforward, small-to-medium batches of standard records, and utilize SuiteTalk (SOAP or REST) APIs or integration platforms for high-volume or complex data loads [21] [22]. For example, the CSV Import Assistant requires no coding and handles common record types easily, while SOAP/REST APIs or middleware support large datasets and custom transformations (albeit with developer effort) [22]. In practice, typical uploads are limited (e.g. ~25K records per CSV by default [23]), so large volumes often require scripted or staged migrations.

- Cutover Planning: Develop a minute-by-minute cutover plan for the final go-live. This plan should freeze the legacy system, extract final data, load into NetSuite, and validate results while delineating fall-back procedures [24] [25]. Essential cutover components include preparation (data freeze, backups, disabling interfaces), execution (system shutdown, data load, reconfiguration), validation (reconciling record counts, balances, and transactions), and rollback triggers if issues arise [24] [25]. A parallel-run or “soft cutover” approach—running both systems in tandem for a short period—can reduce risk by allowing the legacy system to serve as a fallback during validation [26] [9].

- Roles & Governance: Data owners in each business unit must take responsibility for their respective records, verifying accuracy before migration [27] (Source: www.atticus.ph). Project governance should include a cross-functional steering committee to resolve data conflicts and make authoritative decisions on scope and quality [5]. Maintaining a single source of truth and strong communication (including notifying external stakeholders about cutover timing) helps ensure business continuity (Source: www.atticus.ph) [28].

This guide synthesizes industry best practices and expert advice to help organizations plan and execute NetSuite data migrations successfully. It draws on vendor white papers, implementation blogs, and case studies to provide evidence-based recommendations and real-world examples. Comprehensive planning, disciplined data cleansing, and rigorous testing are repeatedly cited as the keys to a clean and confident NetSuite go-live [29] [30]. The tables below summarize critical aspects of migration scope and cutover phases, guiding teams through each step of the process.

Introduction

Organizations worldwide are rapidly adopting cloud ERP solutions like NetSuite to consolidate disparate systems into a unified, real-time platform for finance, sales, supply chain, and more [31] (Source: www.anchorgroup.tech). NetSuite, in particular, is one of the largest cloud ERP providers, serving over 37,000 customers globally (Source: www.anchorgroup.tech). The sheer scale and growth of the ERP market underscores the business imperative: the global ERP market is projected to grow from roughly $50.6 billion in 2023 to $123.4 billion by 2032 (about 10.4% CAGR) [32]. This trend reflects increasing recognition that legacy on-premise systems and siloed data inhibit agility and reporting.

However, empirical evidence shows that simply adopting a modern ERP does not guarantee success. For example, a 2026 industry analysis found that 40% of ERP implementations exceed their budgets due to data migration complications, and nearly 30% fail to deliver expected business outcomes because of inadequate data preparation [3]. A Gartner study similarly warns that over 70% of ERP initiatives fail to meet goals, with up to 25% failing outright [2]. In most of these cases, the culprit is poor data quality or migration planning, not the ERP software itself. In one blog, a practitioner notes that without clean data and thorough mapping, even a technically correct import can lead to “inaccurate reports, broken workflows, reconciliation nightmares, and a team that stops trusting the new system” [33].

Data migration thus emerges as the linchpin of a successful NetSuite implementation. It is not a peripheral task: as one Infotech firm bluntly states, “Data migration is not a technical task that runs in parallel with your ‘real’ implementation. It is the implementation.” [34]. In other words, the quality of your data migration effectively determines whether NetSuite becomes a powerful growth engine or a costly liability. This report provides a detailed walkthrough of best practices for migrating data into NetSuite, covering strategy, data cleansing, mapping, tool choices, execution, and cutover planning. Throughout, we emphasize evidence-based recommendations, citing benchmarks (e.g. percentage of records with quality issues [11]), industry research, and lessons learned from real implementations.

Background on NetSuite and Cloud ERP Migrations

NetSuite is a mature cloud-native ERP platform (launched in 1998) that integrates financials, CRM, inventory, and analytics in one suite [31]. Its pure-cloud architecture eliminates on-premises infrastructure, yielding benefits like scalability, global access, and reduced IT maintenance [35] (Source: www.anchorgroup.tech). As enterprises modernize, migrating legacy ERP or homegrown systems to NetSuite promises streamlined processes and better data visibility [31] [36]. For example, moving to NetSuite can enhance reporting accuracy by consolidating data sources and leveraging built-in compliance tools [37].

Yet, legacy systems accumulate technical debt — decades of customizations, duplicate master records, and outdated formats [38] [8]. Organizations often discover that their old data contain numerous errors (missing fields, obsolete entities, mismatched codes) that simply cannot be dumped into a new system unchanged. Consequently, the migration strategy must include not only the mechanics of moving data, but a business-led decision on which data to bring over and how to rationalize it. As one guide emphasizes, “migrating everything” is a costly mistake; instead, focus on data that adds genuine business value and cleanse the rest beforehand [8] [39]. In practice, companies commonly migrate only a few years of recent history (e.g. 1–2 years of invoices) and active master data, while archiving older records externally [7] [40].

Migrating strategic data assets securely is vital. For example, a recent NetSuite migration project for a technology firm required transferring hundreds of thousands of custom records internally, with no third-party services, to protect sensitive financial data [41]. These examples illustrate that data migration planning must balance business needs (completeness of information, compliance) against technical constraints (migration time, data volume) and security requirements.

Data Migration Strategy

A robust data migration strategy encompasses planning the scope, assembling a qualified team, and outlining a clear roadmap. Successful projects invariably spend substantial effort in the pre-migration phase. One in-depth study notes that data migration itself can consume 40–60% of the total ERP implementation timeline [4]. Thus, starting early, months in advance of the cutover, is essential to mitigate risks.

Governance, Team, and Planning

First, establish a cross-functional migration team. This should include IT personnel (who understand system architecture and integration), data analysts, and business-domain experts (finance, sales, supply chain) who know the legacy data context [5] [42]. Assign clear responsibilities: for instance, designate a data owner in each department to vet their records, and a project manager to coordinate tasks. Executive sponsorship is critical: senior leaders must support the migration effort by resolving inter-departmental conflicts (e.g. differing definitions of “active customer”) and allocating resources [5] (Source: www.atticus.ph). As one consultant advises, getting cross-departmental buy-in prevents “data conflicts” and keeps the project resourced [5].

The planning phase should enumerate what data will migrate and how. Typical categories include master data (customers, vendors, items, employees), open transactional data (unclosed AR/AP documents, open sales or purchase orders), and essential historical records (e.g. 1–2 years of invoices) [7] [40]. Reference data such as chart of accounts must be included (“account structures, GL codes” [8]), but note that accounting dimensions often need reorganization in NetSuite’s segment-based framework. On the other hand, avoid bringing legacy artifacts that NetSuite will replace or that are no longer needed: legacy customizations, user roles, inactive backups, and obsolete records should generally be left behind [7] [40]. The table below (sourced from industry guidance) summarizes common data categories and priorities:

| Data Category | Specific Records | Migration Priority | Notes |
|---|---|---|---|
| Chart of Accounts | Account structures, GL codes | Must Migrate | Restructure as needed for NetSuite's accounting model [8]. |
| Customer / Vendor Records | Names, addresses, contacts, payment terms | Must Migrate | Verify duplicates; clean contact info and tax IDs [43]. |
| Item Records (Products/Services) | Products, services, pricing | Must Migrate | Align with physical inventory; remove discontinued items [44]. |
| Open Transactions | Invoices, bills, POs, SOs | Must Migrate | Essential for financial continuity; bring forward open balances [45]. |
| Financial Balances | Opening balances, GL balances | Must Migrate | Migrate as initial journal entries [45]. |
| Employees / HR Data | Employee profiles, pay rates, roles | Must Migrate | Needed for workflows, permissions [46]. |
| Historical Transactions | Older invoices, orders, payments | Conditional | Often limit to 1–2 years; archive older history [47]. |
| Notes & Attachments | Supporting documents, file notes | Conditional | Prioritize key docs; archive others externally [48]. |
| Legacy Customizations | Custom workflows, scripts, roles | Do Not Migrate | Rebuild rather than carry forward; NetSuite has new framework [7] [49]. |

(Adapted from EPIQ and NetSuite best-practice guides [8] [7].)

Using this scoped approach prevents “replicating technical debt” in the new system [8]. For instance, legacy data audits often uncover 20–40% of records with quality issues [11]; blindly migrating all of them would propagate those problems. Instead, plan to archive irrelevant or stale data in offline storage or legacy read-only systems, especially if retention regulations (e.g. tax or audit rules) do not require active reference [50] [40].

Timeline and Phasing

Construct a detailed migration timeline with milestones. Most mid-sized migrations allocate 8–12 weeks for the data migration effort itself [10]. This window typically includes: data audit & cleansing, building migration templates or scripts, dry-run loads, validation, and the final cutover. Industry benchmarks suggest multiple test loads (often 2–3 full runs) well before go-live [51]. Additionally, allow for a parallel operational period post-migration (for example, running the new and old system side-by-side for 2–8 weeks) to validate that daily transactions and closing processes can complete smoothly [52].

Regarding approach, decide between a “big-bang” cutover vs. a phased migration. A big-bang (hard) cutover means retiring the legacy system in one step and moving all production transactions to NetSuite at once. This is clean but can be higher risk if data issues emerge post-switch. A phased or “parallel” approach transitions modules in stages (for example, migrate master data first, then historical financials, then open transactions near go-live) [9]. It effectively overlaps systems: the business may enter new transactions in NetSuite while still using the legacy system to finalize closing activities [25]. Parallel runs (soft cutovers) give a safety net: as one consultant notes, although parallel operation requires duplicate entries briefly, it “reduces risk” by keeping the old system available as a fallback during validation [26]. The choice depends on factors like the company’s comfort with new software, audit requirements for running side-by-side reporting, and the clean state of legacy data [26] [9]. In practice, large enterprises often prefer phased migrations to minimize downtime and validate system integrity (with e.g. at least one full month-end close performed in parallel) [26].

Example: A NetSuite implementation blog recommends explicitly deciding on cutover method during planning. For example, migrating from QuickBooks to NetSuite could use a hard cutover (stop QuickBooks Friday, start NetSuite Monday) if mid-month [25]. Alternatively, for complex operations requiring extreme caution, the team may continue using QuickBooks to close books while NetSuite handles new entries for a short period [53]. They stress there is no one-size-fits-all answer; the decision should factor in data readiness and business cycle timing [53].

Roles and Communication

Define clear roles: Identify a Data Migration Lead (often a project manager or data architect) to own the overall migration process, and Data Owners in each functional domain to oversee validation of their data sets [54] (Source: www.atticus.ph). The cutover plan in particular requires many hands: IT (for technical loads and backups), finance (for reconciliation and closing), business stakeholders (for sign-off on data accuracy), and communications (to inform all users of downtime). One blog warns that “during cutover, your technical leads, finance team, and business stakeholders all need to operate in tight coordination” [55]. Therefore, a project-level RACI chart (Responsible/Accountable/Consulted/Informed) should drive communication and accountability. Notify any external parties with system interfaces (e.g. vendors, integrators, auditors) about the migration schedule and planned downtime [28].

Leadership should also set data quality goals upfront, e.g. acceptable duplicate rates, error thresholds, or completeness metrics [29]. By establishing these criteria early (sometimes called “data quality acceptance criteria”), the team knows when the source data is sufficiently clean to proceed [29]. This combats the common fate of migrating “dirty” data. As one guide puts it, “garbage in, garbage out”: allowing dirty legacy records into NetSuite will only transfer problems [56].

Key Phases of Cutover (Illustrative)

A successful go-live requires a precise cutover sequence. The table below outlines typical layers and activities, adapted from industry experts [24]:

| Cutover Phase | Key Activities |
|---|---|
| Pre-Cutover Preparation | Freeze legacy data, take full backups, run final data extracts, disable integrations and user edits [24]. Confirm all test migrations have passed. Prepare cutover scripts and rollback procedures. |
| Execution Sequence | Shut down the legacy system, load data into NetSuite (in the agreed order), run data load jobs, run any required configuration scripts. Re-enable integrations (e.g. payment gateways) once relevant data is in place [24]. |
| Validation Checkpoints | Reconcile totals: count records, verify financial balances, test open transactions (e.g. verify open AR/AP invoices match). Perform sample business processes (e.g. create a sales order). Obtain user acceptance sign-off from finance and key users [24]. |
| Rollback Procedures | If critical errors occur, activate rollback plan. This involves restoring data from backups and notifying users. Communication templates are often prepared in advance to inform stakeholders of any rollback action [24]. |

Table 1: Core layers of a data migration cutover plan [24].

Data Cleansing & Preparation

Data cleansing is arguably the most labor-intensive part of migration, but also the most valuable for ensuring success. Multiple sources stress that legacy data audits should begin early and run in parallel with system configuration.

Data Quality Audit

Start with comprehensive data profiling of the source system. Analyze the volume and scope of each data domain: count records (customers, items, transactions), identify date ranges, and evaluate historical data retention needs [11]. Crucially, quantify data quality issues. For example, audits typically find 20–40% of records with some flaw (duplicates, missing fields, invalid values) [11]. Questions to answer include: Which customers have no associated transactions? How many vendors lack tax IDs? Are there GL accounts with unreconciled balances? The answers steer cleansing priorities.
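A profiling pass like this can be approximated with a few lines of scripting over a legacy extract. The following is a minimal sketch, assuming the extract has already been read into a list of dictionaries; the function name `profile_records` and the field names (`name`, `tax_id`) are hypothetical and not taken from any NetSuite tool.

```python
from collections import Counter


def _clean(value):
    """Treat None and whitespace-only values as blank."""
    return "" if value is None else str(value).strip()


def profile_records(records, required_fields, key_field):
    """Count blank required fields and repeated keys in a legacy extract."""
    missing = Counter()
    key_counts = Counter(_clean(r.get(key_field)).lower() for r in records)
    flawed = 0
    for rec in records:
        blanks = [f for f in required_fields if not _clean(rec.get(f))]
        missing.update(blanks)
        key = _clean(rec.get(key_field)).lower()
        is_duplicate = bool(key) and key_counts[key] > 1
        if blanks or is_duplicate:
            flawed += 1
    total = len(records)
    return {
        "total": total,
        "missing_by_field": dict(missing),
        "duplicate_keys": sorted(k for k, n in key_counts.items() if k and n > 1),
        "flawed_records": flawed,
        "flawed_pct": round(100 * flawed / total, 1) if total else 0.0,
    }


# Hypothetical vendor extract used only for illustration.
vendors = [
    {"name": "Acme Corp", "tax_id": "12-345"},
    {"name": "acme corp ", "tax_id": ""},
    {"name": "Globex", "tax_id": "98-765"},
    {"name": "Initech", "tax_id": None},
]
report = profile_records(vendors, required_fields=["name", "tax_id"], key_field="name")
```

The resulting `flawed_pct` figure is the kind of number that can be compared against the 20–40% benchmark cited above and tracked from one cleansing iteration to the next.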

Cleaning Duplicate and Obsolete Records

One of the first tasks is deduplication of master records. Over years of manual entry and siloed systems, it is common to accumulate duplicate customer or item entries. The migration plan should include merging or purging duplicates before the load [14]. For instance, EPIQ consultants instruct: “Remove all duplicate customer, vendor, and item records before extraction.” [57]. Specialized tools or even spreadsheet formulas can aid match-and-merge processes, but often this work requires human validation (e.g. confirming which of two “Acme Corp” entries is the real one). Data cleansing firms frequently note that matching algorithms can resolve many duplicates, but final decisions depend on business rules (like which customer ID is active).
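As a rough illustration of the match-and-merge step, the sketch below groups customer records by a normalized name so that a human reviewer can decide which record survives. The suffix list, the `match_key` rule, and the field names are assumptions chosen for the example; real matching rules would come from the business.

```python
import re
from collections import defaultdict

# Hypothetical list of legal suffixes to ignore when comparing names.
_SUFFIXES = {"inc", "llc", "ltd", "corp", "corporation", "co", "company"}


def match_key(name):
    """Lowercase, strip punctuation, and drop common legal suffixes."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    core = [t for t in tokens if t not in _SUFFIXES]
    # Fall back to the raw tokens if the name consists only of suffixes.
    return " ".join(core or tokens)


def find_duplicate_groups(records, name_field="name", id_field="id"):
    """Return {match key: [record ids]} for keys shared by 2+ records."""
    groups = defaultdict(list)
    for rec in records:
        groups[match_key(rec[name_field])].append(rec[id_field])
    return {key: ids for key, ids in groups.items() if len(ids) > 1}


customers = [
    {"id": "C001", "name": "Acme Corp."},
    {"id": "C017", "name": "ACME Corporation"},
    {"id": "C002", "name": "Globex, Inc."},
]
candidates = find_duplicate_groups(customers)
```

The output is a list of candidates only. Which of the two “Acme” entries is kept remains a business decision, as noted above.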

Withdraw or flag obsolete records at this stage. Houseblend’s guide advises identifying and excluding inactive customers or items (for example, products not sold in the last 2–3 years) [29] [42]. Many companies archive these entities in legacy systems or export them to reporting databases, preserving audit history without clogging the new ERP.

Standardizing and Validating Data

Standardization of formats is another critical step. Legacy data may have inconsistent date formats, address styles, phone number formats, or currency codes. Address data (street vs. PO box conventions) and regional tax fields often require harmonization. The EPIQ guide outlines steps to “standardize formats for dates, phone numbers, country codes, and currencies… to prevent import failures” [58]. Likewise, ensure all required fields in NetSuite have valid values in source data (e.g. customer payment terms, item units of measure), even if you plan to migrate only the minimum necessary data. Missing required fields should be populated with defaults or closest valid substitutes during cleansing.
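The sketch below shows one way such standardization rules might be scripted. It assumes a small, known set of legacy date layouts and a default country calling code of "1"; both are illustrative assumptions, and the output date format should match whatever the target account is configured to accept.

```python
import re
from datetime import datetime

# Assumed legacy layouts. Ambiguous day/month orders must be settled per source system.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y%m%d")


def normalize_date(raw, out_format="%m/%d/%Y"):
    """Parse a date in any known legacy layout and emit a single format."""
    text = raw.strip()
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).strftime(out_format)
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date: {raw!r}")


def normalize_phone(raw, default_country_code="1"):
    """Keep digits only; prefix 10-digit numbers with an assumed country code."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 10:
        digits = default_country_code + digits
    if not 11 <= len(digits) <= 15:
        raise ValueError(f"Unrecognized phone number: {raw!r}")
    return "+" + digits
```

Raising an error on unrecognized values, instead of passing them through, keeps bad rows visible during cleansing so they do not surface later as import failures.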

Validate referential integrity before migrating transactions. That is, confirm that any reference fields (e.g. a sales order’s customer ID) actually exist in the master data being migrated. EPIQ cautions: “Validate that all referenced records exist before importing transactions. Load data in the correct sequence to avoid failures.” [59]. A practical approach is to first load master data objects (customers, items, accounts), then open transactions (orders, invoices). For example, do not load an invoice until its customer and item records are already present in NetSuite.
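A pre-load integrity check along these lines can be run against the cleansed extracts before any transaction file is imported. This is a minimal sketch; the field names `customer_id` and `item_id` are hypothetical stand-ins for whatever keys the legacy system uses.

```python
def check_references(transactions, masters):
    """Return (row number, field, value) for every reference with no master record.

    `masters` maps a reference field name to the set of IDs being migrated.
    """
    problems = []
    for row_number, txn in enumerate(transactions, start=1):
        for ref_field, known_ids in masters.items():
            value = txn.get(ref_field)
            if value not in known_ids:
                problems.append((row_number, ref_field, value))
    return problems


invoices = [
    {"doc": "INV-1", "customer_id": "C001", "item_id": "I-10"},
    {"doc": "INV-2", "customer_id": "C999", "item_id": "I-10"},
]
masters = {"customer_id": {"C001"}, "item_id": {"I-10"}}
orphans = check_references(invoices, masters)
```

Any row reported here would fail, or load incorrectly, if its transaction file were imported before the missing master record exists.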

Ownership and Governance of Data

Assign data owners within the business to oversee each dataset’s quality. These might be functional leads in finance, sales, procurement, etc. Data owners should rigorously review cleansed data for anomalies before final extraction. The Infotech guide, for instance, recommends explicitly assigning data ownership in cleansing steps [54]. This delegation ensures that subject-matter experts confirm the validity of financial opening balances, the completeness of active customer lists, and so forth. It also spreads the workload: instead of IT alone deciding how to merge thousands of duplicate records, each department vets its domain.

Consequences of Poor Data and Benefits of Cleaning

The risks of inadequate cleansing are severe. Dirty data can lead to cascading failures: duplicate or missing records may break downstream processes, reports can be materially incorrect, and user trust in the new ERP plummets if they frequently encounter errors [15] [60]. Conversely, the payoff for doing cleansing well is high. Many experts note that fixing data issues during the project yields the highest ROI [61]. Indeed, technical analyses indicate that post-go-live fixes cost 3–5 times more than addressing issues during migration [62]. Clean, accurate data in NetSuite enables day-one confidence: financial reports tie out, workflows function immediately, and users have one “single source of truth” to trust [63].

Data Mapping and Transformation

Mapping defines how legacy data will appear in NetSuite. This process requires meticulous attention to field-level details. As one expert memo states, “A mapping that seems obvious on the surface often reveals complexity underneath — formatting differences, character limits, and NetSuite-specific structures that do not exist in legacy systems.” [17].

Field Mapping Documentation

Create a formal mapping document (often a spreadsheet) that lists each source field and the target NetSuite field, along with any transformations. Include sample values and whether the field is required. For instance, map “Cust_Num” in the old CRM to “External ID” in NetSuite for the Customer record, noting that leading zeros must be preserved (or stripped) [17]. Where legacy systems use flat text fields for category (e.g. “Dept: Sales”), map these into NetSuite’s native Class or Department fields as appropriate, possibly splitting or reassigning values.
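A mapping document can also be kept in a machine-readable form so that the same rules drive both the documentation and the transformation scripts. The sketch below assumes a simple list of rules; `Cust_Name` and the `Company Name` target column are hypothetical, while `Cust_Num`, `External ID`, and the “Dept: Sales” convention follow the examples in the text.

```python
# Each rule: source column, target column, whether required, and a transform.
FIELD_MAP = [
    {"source": "Cust_Num", "target": "External ID", "required": True,
     "transform": str.strip},  # kept as text so leading zeros survive
    {"source": "Cust_Name", "target": "Company Name", "required": True,
     "transform": str.strip},
    {"source": "Dept", "target": "Department", "required": False,
     "transform": lambda v: v.split(":", 1)[-1].strip()},  # "Dept: Sales" -> "Sales"
]


def apply_mapping(source_row, field_map=FIELD_MAP):
    """Build a target row from a legacy row and collect validation errors."""
    target_row, errors = {}, []
    for rule in field_map:
        raw = source_row.get(rule["source"]) or ""
        value = rule["transform"](raw)
        if rule["required"] and not value:
            errors.append(f"{rule['source']} is required")
        target_row[rule["target"]] = value
    return target_row, errors


row, errors = apply_mapping(
    {"Cust_Num": " 00042 ", "Cust_Name": "Acme Corp", "Dept": "Dept: Sales"}
)
```

Treating the customer number as text throughout is a deliberate choice here: converting it to an integer at any point would silently strip the leading zeros.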

NetSuite introduces constructs like OneWorld subsidiaries, department, class, and location hierarchies that many legacy systems lack [64]. These require additional mapping logic. For example, a company might decide to map legacy business units to subsidiaries, and enter the subsidiary (which is mandatory in OneWorld) based on country of origin. Open discussions with finance teams are crucial here. As EPIQ notes, chart-of-accounts mapping is “business-critical work… requiring collaboration with finance teams to ensure accurate reporting” [65].

Transformation Rules and External IDs

Apply necessary data transformations. For example, if legacy amounts used a comma decimal separator, convert them to a period; standardize yes/no to Y/N, etc. Leverage NetSuite’s transformation tools, SuiteScript, or middleware as needed. One guide suggests using SuiteScript when custom logic is required, or a middleware tool when many-to-many conversions are needed [18]. Regardless of method, always perform trial transformations on a small dataset and validate results [18].
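The two transformations mentioned above could be scripted roughly as follows. This sketch assumes the legacy system writes amounts with a comma decimal separator and a period as the thousands separator, and that the listed spellings are the only yes/no variants in the source; both assumptions should be confirmed against real data.

```python
from decimal import Decimal, InvalidOperation

_YES = {"yes", "y", "true", "1"}
_NO = {"no", "n", "false", "0", ""}


def parse_comma_decimal(raw):
    """Convert an amount such as '1.234,56' to Decimal('1234.56').

    Assumes '.' is a thousands separator and ',' the decimal separator;
    amounts already using a period decimal would be misread.
    """
    text = raw.strip().replace(".", "").replace(",", ".")
    try:
        return Decimal(text)
    except InvalidOperation:
        raise ValueError(f"Unrecognized amount: {raw!r}") from None


def normalize_flag(raw):
    """Map common yes/no spellings to 'Y' or 'N'."""
    text = raw.strip().lower()
    if text in _YES:
        return "Y"
    if text in _NO:
        return "N"
    raise ValueError(f"Unrecognized flag: {raw!r}")
```

Using `Decimal` instead of `float` avoids rounding drift in monetary amounts, which matters later when balances are reconciled to the cent.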

Use an External ID (unique key) for records where possible. External IDs serve as persistent links between legacy and NetSuite records. For example, if legacy customer numbers are meaningful, store them in NetSuite’s “External ID” field. EPIQ recommends this: “External IDs create a persistent link between legacy records and NetSuite records. They are critical for linking related records and simplifying post-migration updates.” [19]. This technique helps when importing transactions: you can reference the External ID of a customer or item instead of needing the NetSuite internal ID.

Testing and Documentation

Compile all mapping and transformation rules into documentation. Houseblend advises maintaining a “data mapping bible” that records every mapping decision and logic [20]. This document is critical for audit purposes, troubleshooting, and future reference. For instance, if three years later someone asks why certain sales orders did not migrate, the mapping bible should explain if fields were intentionally dropped or reclassified. Documenting also makes it easier to onboard consultants or new team members who join mid-project.

Run multiple mapping tests before full-scale loading. The process typically involves:

- Load a small “seed” set of master records into a sandbox of NetSuite.

- Run a corresponding small transaction load referencing those masters.

- Verify data relationships and accuracy (e.g. does the “April” invoice appear in the right GL account and for the correct customer?). Houseblend and EPIQ both stress the importance of immediate validation: check a few records through the entire pipeline to confirm that dates, links, and totals align with expectations [18] [20].
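One simple way to assemble such a seed set is to pick a handful of master records and then pull only the transactions that reference them, so every relationship in the test load can be resolved. The sketch below assumes customers and invoices are lists of dictionaries linked by an external ID; the field names are hypothetical.

```python
def build_seed_set(customers, invoices, sample_size=3):
    """Pick the first few customers plus every invoice that references them."""
    seed_customers = customers[:sample_size]
    seed_ids = {c["external_id"] for c in seed_customers}
    seed_invoices = [
        inv for inv in invoices if inv["customer_external_id"] in seed_ids
    ]
    return seed_customers, seed_invoices


customers = [{"external_id": f"C{n:03d}"} for n in range(1, 6)]
invoices = [
    {"doc": "INV-1", "customer_external_id": "C001"},
    {"doc": "INV-2", "customer_external_id": "C004"},
    {"doc": "INV-3", "customer_external_id": "C002"},
]
seed_customers, seed_invoices = build_seed_set(customers, invoices, sample_size=2)
```

In practice the sample would be chosen to cover awkward cases (multi-currency customers, partially paid invoices) and not simply the first rows of the file.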

By systematically auditing, cleaning, and mapping data in advance, teams dramatically reduce the likelihood of nasty surprises at go-live. NetSuite migration specialists unanimously advise: do not postpone data quality fixes until after cutover. As EPIQ summarizes, “cleaning [dirty data] in a live NetSuite environment is exponentially more painful than cleaning it in advance” [60].

Data Migration Execution Tools and Methods

NetSuite provides several mechanisms for importing data, and choosing the right tool for each task is key to an efficient migration.

NetSuite’s Native CSV Import Assistant

The most common starting point is NetSuite’s CSV Import Assistant [21]. This is a wizard-driven interface (Setup > Import/Export > Import CSV Files) that supports importing most NetSuite record types (customers, items, transactions, etc.) via CSV files [66]. It allows you to save an import job and reuse field mappings. The CSV import is ideal for small to medium datasets with standard structures: it requires no coding and can handle straightforward field-to-field imports. According to best practice guides, it works well for up to a few thousand records at a time [67].

However, CSV import has limitations: it can be slow for very large volumes and does not support complex transformations. Large transactional imports (thousands of rows) may time out or process slowly. Official documentation notes that, for performance, transactions with over 1,000 lines may be problematic [68]. In reality, most NetSuite accounts impose a 25,000-line limit per CSV upload (as VNMT consultants observed) [23]. To import, say, 100,000 sales orders, you would need to split into multiple files or use an API approach.
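Splitting a large extract into files that fit under the 25,000-line limit cited above is straightforward to script. The sketch below additionally keeps all lines of one document in the same chunk, on the assumption that rows are sorted so a document's lines are adjacent; the `doc` field name is hypothetical.

```python
from itertools import groupby


def chunk_by_document(rows, doc_field, max_rows=25_000):
    """Yield lists of rows, never splitting one document across two chunks.

    Assumes rows for the same document are adjacent. A single document with
    more than max_rows lines would still produce an oversized chunk.
    """
    chunk = []
    for _, lines in groupby(rows, key=lambda r: r[doc_field]):
        lines = list(lines)
        if chunk and len(chunk) + len(lines) > max_rows:
            yield chunk
            chunk = []
        chunk.extend(lines)
    if chunk:
        yield chunk


# 100,000 single-line sales orders split into four files' worth of rows.
orders = [{"doc": f"SO-{n}"} for n in range(100_000)]
chunks = list(chunk_by_document(orders, "doc"))
```

Each chunk can then be written to its own CSV file with the header row repeated, and logged so that every load job traces back to a specific source file.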

SuiteTalk SOAP/REST APIs

For bulk or ongoing migrations, NetSuite’s SuiteTalk SOAP or REST Web Services are often the best choice [69] [70]. These APIs let you script the import process with full control and can handle large or hierarchical datasets more reliably. SuiteTalk SOAP is well-suited for heavy loads and complex record relationships (join transactions to multiple line items, etc.) [70]. The trade-off is that it requires developer effort to write the integration code or scripts, and each call is subject to governance limits (API usage quotas) [70].

SuiteTalk REST (REST Web Services), available in recent NetSuite versions, provides a more modern, potentially faster alternative. It is optimized for real-time syncing and works well for integrations that need current data [71]. However, as one source notes [53], REST has “limited record support,” meaning some record types or complex datasets still need SOAP or CSV. In either case, API-based loads typically rely on external scripts or tools that handle batching.

Integration Platforms & Third-Party Tools

Many organizations use iPaaS/integration platforms such as Boomi, Celigo, or MuleSoft to manage NetSuite migrations [72] [73]. These platforms provide visual interfaces for mapping and data flow, and often come with pre-built connectors for NetSuite. They are especially useful when migrating data across multiple systems (e.g. from an old on-prem ERP and a CRM simultaneously) or when orchestrating a sequence of loads. In the tools table from EPIQ, “Celigo / Boomi / MuleSoft” is noted as best for complex integrations and reusable workflows [73], though it entails licensing cost and setup complexity.

NetSuite SuiteScript

NetSuite’s custom scripting (SuiteScript) can also be used to automate imports. SuiteScript 2.0 provides APIs (like task.CsvImportTask or direct record APIs) to load data programmatically [74]. This approach is flexible (you can add custom validation or transformation logic mid-flight) and can be scheduled. The EPIQ table notes that SuiteScript is great for automated imports and scheduled jobs [75], but is subject to script governance limits and requires development skill. For example, a script could parse a CSV file, validate each row, and insert records one by one. This is often used for incremental updates or to fill data that the CSV assistant can’t handle (like static lists after go-live).

Tool Selection Guidelines

Choosing the right tool depends on data size and complexity. As EPIQ advises: “Choosing the right tool for each data type and volume is critical — the wrong tool choice can turn a smooth migration into a weeks-long ordeal” [76]. For instance, using CSV import for a 200,000-record volume could lead to timeouts, whereas a scripted SOAP import could handle it in fewer, larger batches. Indeed, in one case study, a consulting partner built a custom script to allow uploading 200,000 records at once, bypassing NetSuite’s 25,000 limit [23]. Thus, plan your approach: start with CSV for small tables (lists of 50–100 fields) and move to API or scripted methods for very large or relational data.

Finally, keep logs of all migration runs. Track which source file corresponds to which load job, and save import results. NetSuite provides status pages for CSV imports, which should be monitored and logged off-system. Any errors (e.g. record rejections) should be fixed in the source data and retried. A best practice is to test each import on a sandbox or test account first, then move the process to production with confidence [18] [17].

Migration Testing and Validation

Testing is iterative throughout the migration process, but especially critical in the final cutover rehearsal and post-load verification.

Dry-Run Migrations

Conduct multiple test migrations into a non-production environment (e.g. a NetSuite Sandbox or Beta) that mimics the production configuration. According to one migration guide, plan 2–3 full test cycles [77]. Each cycle should cover a representative dataset (samples of master records, transactions) and follow the entire process (extract → transform → load → validate). After each test load, run reconciliation reports. Key checks include:

- Record counts: Compare the number of records in source vs NetSuite (e.g. total customers, total invoices).

- Financial balances: Ensure that the general ledger balance (in total and by account) in NetSuite matches the source system's closing balances [78].

- Open Transactions: Verify that all open AR and AP items have imported correctly and their totals match the source report [79].

- Integration Flows: If any interfaces exist (e.g. bank feed, e-commerce connection), test those end-to-end in the test environment.
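The record-count and balance checks above lend themselves to a small script. The following is a minimal sketch that assumes both systems can export record counts and per-account balances into plain dictionaries; the account names and figures are invented for illustration.

```python
from decimal import Decimal


def count_gaps(source_counts, target_counts):
    """Return {record_type: (source, target)} wherever the counts differ."""
    return {
        kind: (n, target_counts.get(kind, 0))
        for kind, n in source_counts.items()
        if target_counts.get(kind, 0) != n
    }


def balance_gaps(source, target, tolerance=Decimal("0.00")):
    """Return {account: (source, target)} where balances differ beyond tolerance."""
    gaps = {}
    for account in sorted(set(source) | set(target)):
        src = source.get(account, Decimal("0"))
        tgt = target.get(account, Decimal("0"))
        if abs(src - tgt) > tolerance:
            gaps[account] = (src, tgt)
    return gaps


legacy_counts = {"customers": 1200, "invoices": 5400}
netsuite_counts = {"customers": 1200, "invoices": 5398}
legacy_gl = {"1000 Cash": Decimal("15000.00"), "1200 AR": Decimal("8200.50")}
netsuite_gl = {"1000 Cash": Decimal("15000.00"), "1200 AR": Decimal("8100.50")}

print(count_gaps(legacy_counts, netsuite_counts))
print(balance_gaps(legacy_gl, netsuite_gl))
```

Using `Decimal` rather than floats avoids spurious mismatches caused by binary rounding of currency amounts.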

Each test pass will generate errors or mismatches that the team should document and correct. The cost of ignoring test failures is high: errors that are not caught during test runs often result in costly disruptions after go-live [80] [62]. The TopDynamics study even notes that resolving a data issue after go-live can cost 3–5× more than fixing it pre-launch [62].

Data Reconciliation and Sign-Off

After each test or the final migration, cross-functional validation is needed. For example, finance teams should sign off on trial balance comparisons, while operations may verify inventory counts. Involving end-users early in checking migrated data (e.g. “Does customer A have the correct address?”) helps catch subtle issues. One expert advises scheduling a meeting (or set of meetings) for users to “validate accuracy with teams and stabilize system performance” [16]. Only once all key stakeholders confirm that the migrated data is accurate and complete should the cutover window be finalized.

A sample checklist might include:

- GL account mapping is correct and trial balances match.

- Open invoices and payments align with sub-ledger totals.

- Customer and vendor master lists have no glaring duplicates.

- Items have the correct unit-of-measure and category.

- Sample sales/purchase orders can be created and flow correctly.

Any discrepancies should trigger either data fixes or corrections in mapping logic, followed by re-running the import for affected records. Document every validation outcome.
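Some checklist items, such as the duplicate check on customer and vendor lists, can be partly automated. The sketch below groups records by a normalized name; the normalization rules (lowercasing, stripping punctuation and a few legal suffixes) are assumptions for illustration and would need tuning to your data. Anything it flags still needs human review.

```python
import re
from collections import defaultdict

SUFFIXES = {"inc", "llc", "ltd", "corp"}  # assumed list of ignorable suffixes


def normalize(name):
    """Lowercase, drop punctuation and legal suffixes so near-identical names collide."""
    words = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(w for w in words if w not in SUFFIXES)


def find_duplicates(records):
    """Group (external_id, name) pairs by normalized name; keep groups of 2+."""
    groups = defaultdict(list)
    for ext_id, name in records:
        groups[normalize(name)].append(ext_id)
    return {key: ids for key, ids in groups.items() if len(ids) > 1}


customers = [("C-1", "Acme, Inc."), ("C-2", "ACME Inc"), ("C-3", "Bolt Ltd")]
print(find_duplicates(customers))
```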

Post-Migration Parallel Run (if applicable)

If a parallel-run approach is chosen, the post-migration validation extends over a period (weeks to months). During this phase, continue to reconcile NetSuite and legacy system outputs. For example, one or two complete month-end closes might be executed using both systems in parallel to ensure the NetSuite results can stand alone. In the LinkedIn example, a consultant recommends a parallel run (soft cutover) on every project, noting that performing at least one month-end close in both systems before fully switching ensures “stability and data integrity” [26]. This extra precautionary step can catch issues that a single cutover might overlook.

At the end of validation, formally sign off the migration data as “certified” for production use. This might involve a final approval document signed by department heads or the project steering committee. Afterwards, legacy data is typically archived, and the NetSuite data set becomes the sole authority going forward.

Cutover Execution and Post-Migration Validation

The actual cutover weekend or go-live period is the culmination of all preparation. The detailed cutover plan (Table 1) is now executed. Key elements include:

- Data Freeze: On the scheduled date, halt all updates to the legacy system. This ensures the final snapshot is consistent. The plan must specify the exact time (e.g. 5 pm Friday) at which the cutover begins [24].

- Final Extracts: Export the latest data (customers, items, open transactions, etc.) as defined. Confirm that extracts match expected totals (e.g. record counts).

- System Backup: Take a full backup of the legacy production database just prior to migration (as Houseblend notes, this safeguards against data loss if rollback is needed [28]).

- Data Load: Perform the data import into the live NetSuite instance, following the earlier-tested sequence. For bulk imports, monitor the progress and check for any error messages. If there are failures (due to data anomalies or mapping issues), pause and address them immediately if possible.

- Enable Integrations: Once core data is loaded, turn on external integrations/interfaces in the proper order. For example, re-enable any electronic banking import, credit card processing feeds, or third-party applications. Do not enable them until prerequisite data (customers, items) exist.

- Smoke Testing: Have key users and IT run a pre-defined set of business-critical operations (e.g. generate a sales order and an invoice, post a payment, run a tax report) to verify basic system function.

- Validation: Execute the reconciliations as described in the testing section. This might involve running financial reports (trial balance, aging, inventory valuation) and confirming figures match the legacy ending figures [78] [79].

- Go/No-Go Decision: If validation is good, declare the cutover a success and allow normal business operations to resume on NetSuite. If serious issues are found, the rollback plan is enacted: restore the legacy system from backup and delay go-live.
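The sequence above can be treated as an ordered runbook in which each step must pass before the next begins. The sketch below is a simplified model under that assumption: each step is a name paired with a check function (stubbed here with lambdas), and the first failed check produces a no-go result that would trigger the rollback plan.

```python
def run_cutover(steps):
    """Run (name, check) steps in order; stop at the first failed check."""
    completed = []
    for name, check in steps:
        if not check():
            return {"decision": "NO-GO", "failed_step": name, "completed": completed}
        completed.append(name)
    return {"decision": "GO", "failed_step": None, "completed": completed}


# Stub checks; real ones would verify extract totals, import results, and reports.
steps = [
    ("data freeze", lambda: True),
    ("final extracts", lambda: True),
    ("legacy backup", lambda: True),
    ("data load", lambda: True),
    ("enable integrations", lambda: True),
    ("smoke testing", lambda: True),
    ("validation", lambda: False),  # simulate a trial-balance mismatch
]
result = run_cutover(steps)
print(result["decision"], result["failed_step"])
```

Recording which steps completed before a failure tells the team exactly what the rollback has to undo.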

A clean, well-validated cutover yields a “clean state” in the new system on day one. Users can immediately enter new transactions with confidence. Conversely, a chaotic cutover (muddled extracts, uncaught errors) can cause business disruptions and erode trust in the ERP. CFOs and controllers scrutinize the cutover particularly closely; executives need assurance that the final financials will close correctly. As one blog emphasizes, a cutover plan must account for the finance team’s checkpoints to protect ROI [81].

Case Studies and Examples

Real-world NetSuite migration projects illustrate these principles in action:

- Land O’Lakes (Food & Agriculture): An Atticus case study notes that the cooperative carefully planned and executed the migration of legacy financial data, ensuring “all critical data was accurately transferred and that there was no disruption to business” (Source: www.atticus.ph). Their example highlights the emphasis on precision and continuity.

- Green Rabbit (Tech Startup): A rapidly growing startup in delivery, Green Rabbit, attributes their NetSuite success to extensive preparation. They spent “several months preparing” by mapping processes and identifying improvement areas, which allowed them to “hit the ground running” when NetSuite went live (Source: www.atticus.ph). This underscores the value of upfront planning and process alignment.

- GoPro (Manufacturing): GoPro’s implementation lesson was the importance of customization and alignment. They “worked closely with the NetSuite team to customize the system to their specific needs, including integrating with existing systems and workflows” (Source: www.atticus.ph). In other words, migration isn’t just data — it’s also about tailoring NetSuite to fit the business, which affects how data fields and processes are configured.

- Rapid Data Migration (Legendary Case): In one example from VNMT Consulting, a client needed to bulk-migrate hundreds of thousands of custom records without third-party involvement for security reasons [41]. The consultants developed native NetSuite scripts that enabled uploads of up to 200,000 records at once (far exceeding the native 25,000-record cap) [23]. This solution ensured high data security and performance, demonstrating how technical ingenuity can solve scale challenges.

- General Survey Insights: Analysts have found that even after going live, companies often come back to clean up data. LinkedIn posts emphasize that teams that rush an ERP cutover and carry dirty data into the new system pay the price in user adoption and report accuracy [15]. Conversely, implementations that built in thorough data audit phases report smoother transitions and higher ROI.

These case examples all reinforce the same themes: plan thoroughly, clean data rigorously, and validate diligently. They also show that success can be achieved across industries (manufacturing, food, tech startups) by following a disciplined methodology.

Future Directions and Implications

Looking ahead, the emphasis on data quality and seamless migration will only intensify. As AI and machine learning tools mature, we can expect data profiling and cleaning to become more automated (e.g. algorithms to detect duplicates or suggest mappings) [82] [83]. For example, some vendors are developing AI-powered “migration engines” that attempt to pre-map fields or flag anomalies [82]. Organizations should stay abreast of such tools, although they must still validate outputs carefully.

The move to cloud ERP and integrated platforms (ERP + CRM + Commerce) means future migrations will often span multiple applications. Thus, modern migrations will likely use robust iPaaS solutions and perhaps multi-stream cutovers (e.g. migrating subscription billing in parallel with finance) [84] [73]. Data governance practices (master data management, data governance councils) will increasingly be applied to avoid repeating past mistakes in the next migration cycle.

Finally, the COVID-era acceleration of digital transformation has raised expectations that ERP implementations deliver immediate value. Consequently, project sponsors will demand clear metrics of data accuracy and project discipline. The “critical success factor” is avoiding the trap of deferring data issues, because, as the study cited earlier shows, resolving problems after go-live can cost 3–5× more than fixing them before launch [62]. Clean data migrations enable faster user adoption and better attainment of ERP benefits (improved reporting cycles, better decision-making) (Source: www.anchorgroup.tech) [15].

In sum, robust data migration practices are fundamental to leveraging NetSuite’s potential. While the specifics can vary by organization, the underlying principles remain consistent. Teams that invest the time to cleanse, map, and test data thoroughly reap dividends: minimized downtime, confident go-live, and software that truly transforms operations.

Conclusion

Data migration to NetSuite is a complex, high-stakes undertaking—and one that demands meticulous attention to planning and data quality. This report has shown that a successful migration strategy rests on several pillars: defining a realistic migration scope, assembling the right team and governance, rigorously cleansing and mapping data, leveraging appropriate tools, and executing a tightly-controlled cutover. Each of these steps should be validated by data: tests, reconciliations, and business sign-offs.

When done correctly, NetSuite data migration becomes the launchpad for digital transformation. Accurate, well-structured data allows organizations to capitalize on NetSuite’s cloud capabilities from day one. Conversely, skipping steps can leave organizations with broken reports and frustrated users. As one implementation firm summarizes it: the quality of your data migration “determines whether NetSuite becomes an engine for growth or an expensive liability” [34].

Given how prevalent data issues are in ERP projects, following the best practices outlined here is not optional but essential. In practice, that means allocating sufficient time (often several months) to data assessment and cleansing, involving business stakeholders in every phase, and never underestimating the cutover transition. The myriad citations in this report—from industry analyses to consultant case studies—underscore a universal truth: the most successful NetSuite go-lives are those where data migration was treated not as an afterthought, but as the core of the implementation. By adhering to a disciplined strategy, organizations can ensure that their NetSuite deployment starts on a rock-solid data foundation, paving the way for long-term operational and financial benefits [61] (Source: www.atticus.ph).
